Client Background

The client is an American home furnishings manufacturer and retailer headquartered in Arcadia, Wisconsin. It manufactures home furniture and distributes it worldwide through independently owned stores in the US, Canada, Mexico, Japan, and other countries.

Objective

The primary goal of this project was to develop a platform that helps the client streamline its data analytics processes.

Country

USA

Industry

Retail / FMCG

Solution

Data Engineering & Analytics, Cloud, Google Cloud Platform, Power BI

Challenges

With 1,000 retail stores in the US alone, comprising 600 enterprise-managed and 400 licensee-managed stores, the client is one of the largest furniture manufacturers in the world.

- Difficulty in identifying name-based customer data and building a demographic view of it across two data sources, namely enterprise and licensee stores

- Difficulty in collating asymmetric, unlinked data with diverse patterns into a common data set

- Errors in synchronizing customer data to Google Cloud Storage because the stores lacked a centralized system

- No accurate system to identify customers' buying patterns, spending capacity, product preferences, and preferred stores

- Manual data ingestion that introduced typos and spelling mistakes

Solution

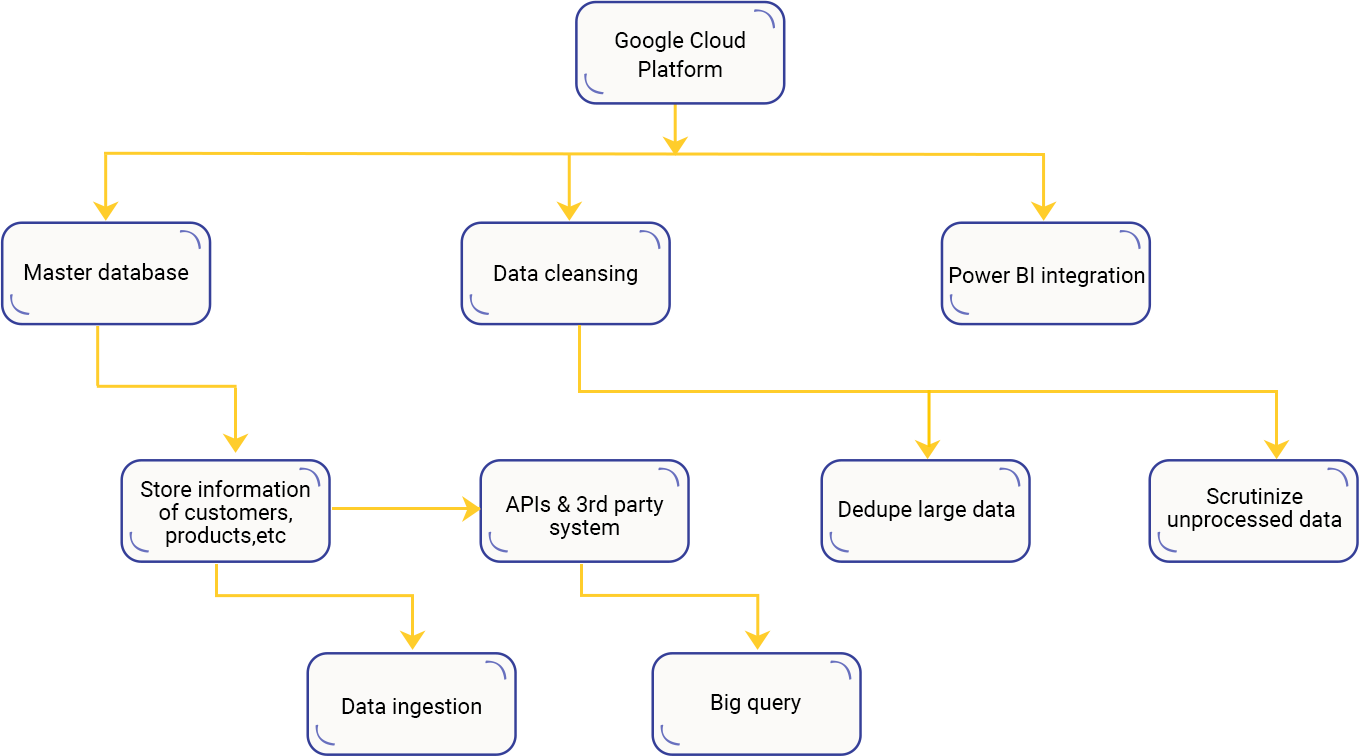

The current phase is the second one, in which the individual components of the GCP platform and the web frontend were developed as per the defined scope of the system. The work in this phase is broken down below:

- We used Dataflow to ingest data from various data sources. With this, we created a master list of customers, products, and parts and used it during the data ingestion phase

- Because large volumes of data were collected at regular intervals, the data had to be quickly accessible through APIs and to other third-party systems as well. We used BigQuery to store all processed data, which the frontend systems could access via APIs

- The next step was data cleansing and processing, which was necessary to deduplicate the large volume of duplicate and unprocessed data that lacked proper information

- This was the most important phase of the solution. Using Google’s Data Studio platform, our team analyzed KPIs such as single customer view, spend analysis, customer crossover, and lifetime value. After the analysis, we had a filtered customer database along with a roadmap of each customer's purchasing behaviour

- We developed and deployed a frontend using Node.js and React, in which authorized persons from marketing and sales could log in and access the single customer view and other important business-centric KPIs

- In many cases, we also cross-referenced government records to ensure that there was no duplicate data and that only accurate data was recorded in the system

- We centralized and combined the BI reports, which the client then used for its business endeavours
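The cleansing and deduplication step described above can be illustrated with a minimal Python sketch. It is not the project's actual Dataflow pipeline: the record fields (`name`, `zip`, `source`), the 0.85 similarity threshold, and the sample data are all assumptions made for illustration. It shows one common way to merge near-duplicate customer names (for example, typos from manual entry) coming from enterprise and licensee stores into a single master list.

```python
import re
from difflib import SequenceMatcher


def normalize(name: str) -> str:
    """Lowercase, drop punctuation and digits, and collapse whitespace."""
    cleaned = re.sub(r"[^a-z\s]", "", name.lower())
    return " ".join(cleaned.split())


def is_same_customer(a: dict, b: dict, threshold: float = 0.85) -> bool:
    """Assumed rule: same ZIP code and sufficiently similar names."""
    if a["zip"] != b["zip"]:
        return False
    ratio = SequenceMatcher(None, normalize(a["name"]), normalize(b["name"])).ratio()
    return ratio >= threshold


def build_master_list(records: list) -> list:
    """Greedy dedupe: keep the first record of each match and track its sources."""
    master = []
    for rec in records:
        for kept in master:
            if is_same_customer(kept, rec):
                kept["sources"].add(rec["source"])
                break
        else:
            # No existing master record matched, so start a new one.
            master.append({**rec, "sources": {rec["source"]}})
    return master


# Hypothetical sample records from the two store types.
records = [
    {"name": "Jonathan Smith", "zip": "54612", "source": "enterprise"},
    {"name": "Jonathon  Smith.", "zip": "54612", "source": "licensee"},
    {"name": "Maria Lopez", "zip": "60601", "source": "licensee"},
]
master = build_master_list(records)
for customer in master:
    print(customer["name"], sorted(customer["sources"]))
```

Here the two spellings of the same name collapse into one master record tagged with both sources. A pairwise comparison like this is quadratic in the number of records, so at real data volumes the matching would typically be restricted to candidate groups (for example, by ZIP code) before comparing names.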

Project Highlights

Data Ingestion

BigQuery & Data Studio

Data Storage

Data Cleansing & Processing

Data Analytics

Google Cloud Platform

KCS Approach

To simplify the client’s big data analytics operations, experts at KCS created a web platform with the help of BigQuery and Data Studio. We used Dataflow to ingest data from various data sources. Data cleansing and processing were important for deduplicating the large volume of duplicate and unprocessed data that lacked proper information. Using Google’s Data Studio platform, our team analyzed KPIs such as single customer view, spend analysis, customer crossover, and lifetime value.
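As a rough illustration of two of the KPIs named above, the sketch below computes per-customer spend and customer crossover from a small set of cleaned transactions. The transaction layout, the sample values, and the definitions used are assumptions: crossover is taken to mean a customer who bought from both enterprise and licensee stores, and total historical spend stands in as a simple proxy for lifetime value. The project itself performed this analysis in Data Studio, not in Python.

```python
from collections import defaultdict

# Hypothetical cleaned transactions: (customer_id, store_type, amount_usd)
transactions = [
    ("C1", "enterprise", 1200.0),
    ("C1", "licensee", 300.0),
    ("C2", "enterprise", 450.0),
    ("C3", "licensee", 800.0),
    ("C3", "licensee", 150.0),
]


def customer_kpis(rows):
    """Aggregate total spend and the set of store types per customer."""
    spend = defaultdict(float)
    channels = defaultdict(set)
    for customer_id, store_type, amount in rows:
        spend[customer_id] += amount
        channels[customer_id].add(store_type)
    # Crossover (assumed definition): bought from both store types.
    both = {"enterprise", "licensee"}
    crossover = sorted(c for c, seen in channels.items() if both <= seen)
    return dict(spend), crossover


spend, crossover = customer_kpis(transactions)
print(spend)      # {'C1': 1500.0, 'C2': 450.0, 'C3': 950.0}
print(crossover)  # ['C1']
```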

Outcome

- We created a web platform with access restricted to authorized users

- Key deliverables from the Google data analytics work helped the client make the right marketing and sales pitches and decisions

- Improved platform performance and sizing resulted in greater adoption and a higher success rate

- Large-scale record processing using the architecture on GCP, especially BigQuery

- Quicker and more reliable outcomes using BigQuery for data storage and analytics

Tech Stack